Tesla’s controversially named Full Self-Driving Beta is officially rolling out to more beta testers on the road. Drivers who purchased the $10,000 add-on are eligible to receive the update, as long as they also achieve a perfect Tesla-ranked “safety score.”

Originally, the feature was to be deployed to eligible drivers starting late last week; however, certain “last minute concerns” halted the rollout, according to CEO Elon Musk. The delay didn’t last long, though: the pause ended on Monday, and eligible drivers began receiving the over-the-air update that enables the highly coveted FSD Beta.

To be eligible, drivers must have purchased the feature outright or subscribed to it for $199 per month, and must also have proved to Tesla that they are responsible drivers. The latter is determined by having the vehicle rate how safely it is being driven and assign the driver a “safety score” between 0 and 100.

Tesla’s safety score has become a bit of a game, with owners competing to see just how high they can push their rankings. Points can be deducted for hard braking, aggressive turning, unsafe following, forward collision warnings, and forced Autopilot disengagements. In all, only around 1,000 drivers had a perfect safety score, according to Musk.

Musk says the beta will eventually be made available to drivers with scores of 99 and below.

Feedback on the beta still seems to be mixed, however. While some drivers have praised it highly, others note that its performance is very inconsistent and less predictable than that of Tesla’s Autopilot system. Some criticism even echoes the FSD Beta’s early caveat that it may do “the wrong thing at the worst time.”

“First drive on 10.2 and it’s insanely impressive on how well it works, but holy hell does it struggle on every drive in some scenarios and is way less predictable on when it will fail compared to normal Autopilot,” said Reddit user Kev1000000. “With regular AP, it was relatively easy to fall into complacency since you pretty much know when it’s going to fail. FSD Beta can randomly do dumb things that will kill you when you’re not predicting it.”

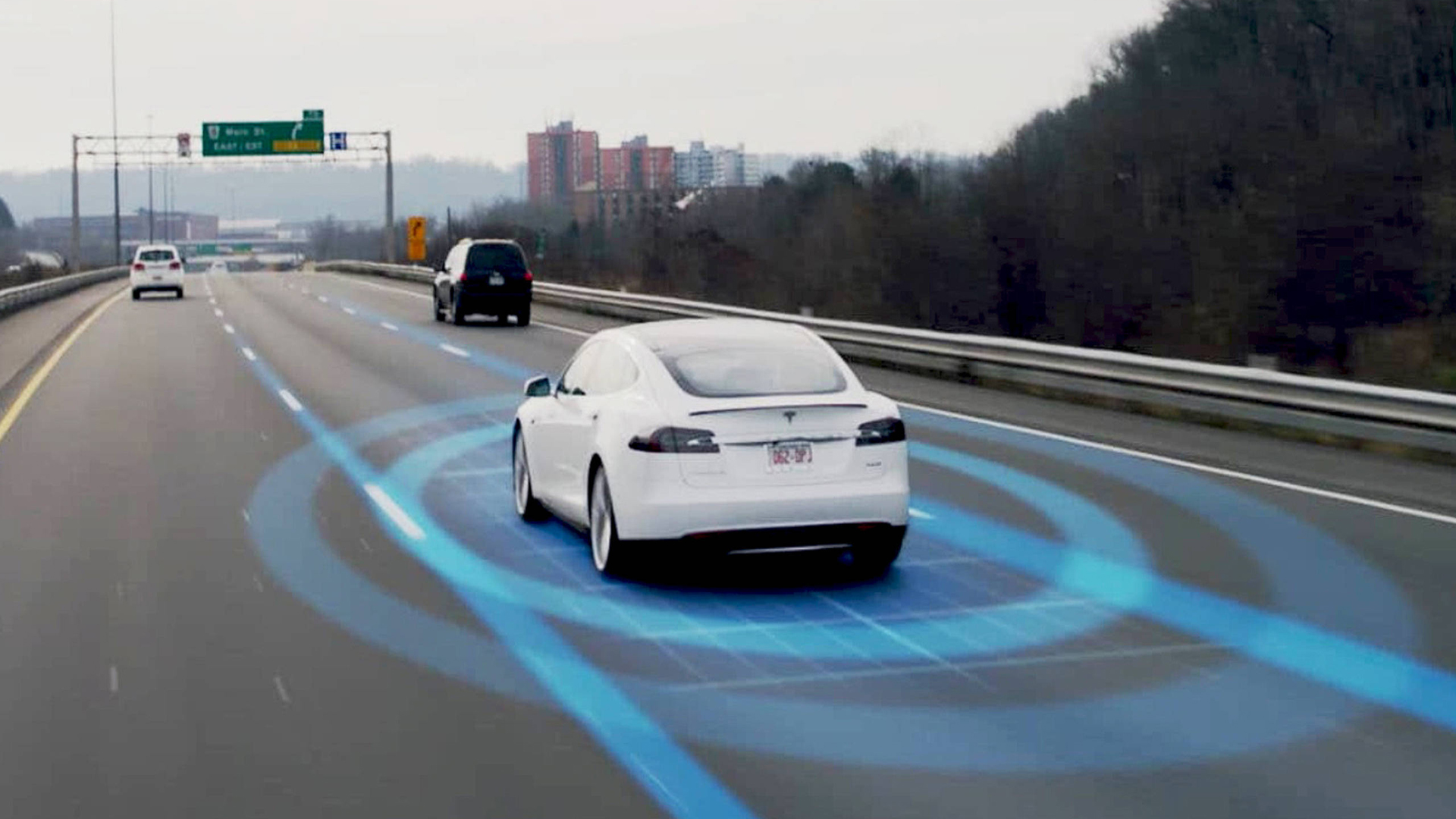

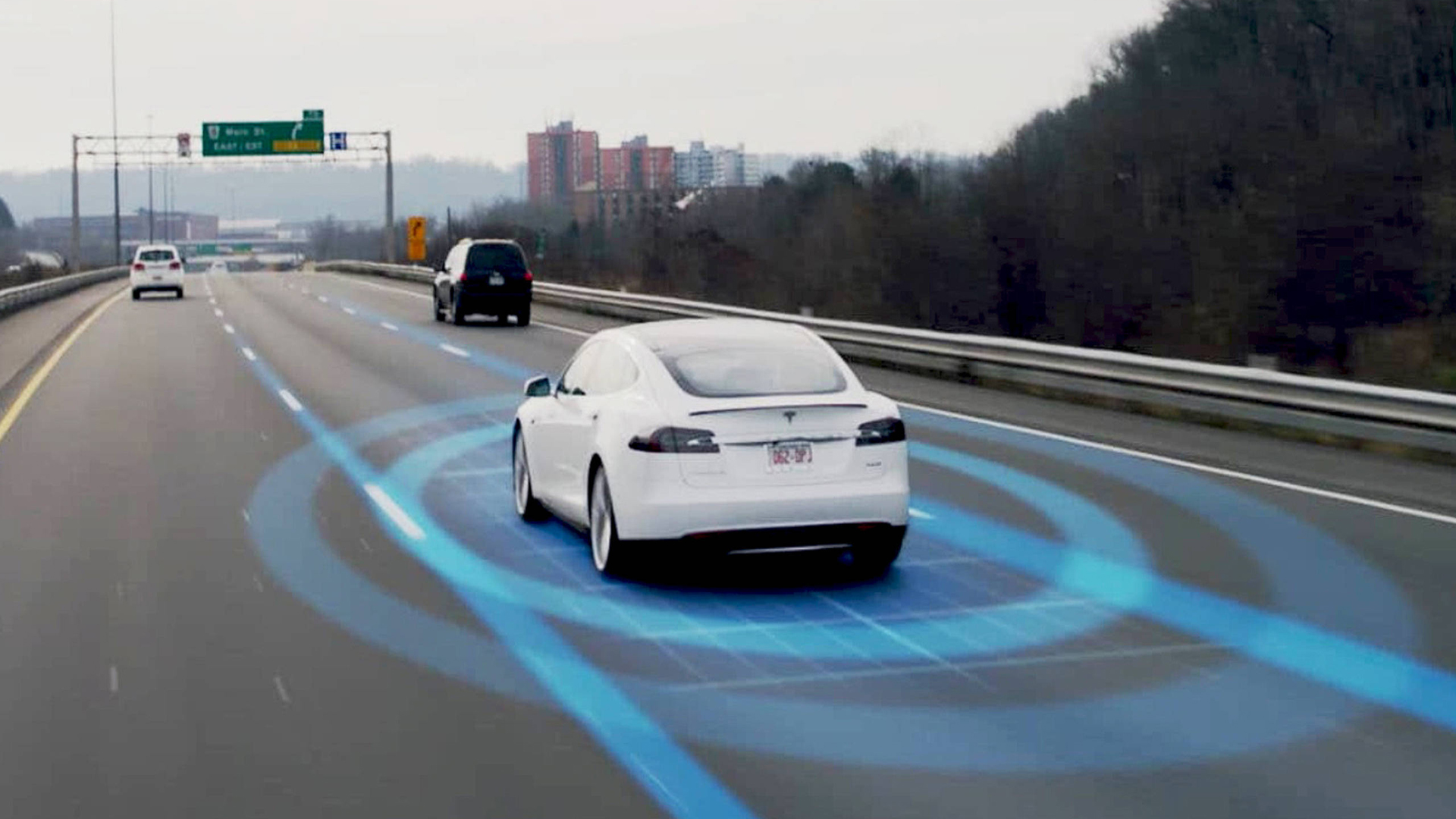

It’s important to remember that FSD Beta, despite its name, is not an autonomous driving system. Tesla has previously told California regulators that FSD Beta fits the SAE definition of Level 2 partial driving automation, meaning that the person behind the wheel is still responsible for controlling the vehicle.

The head of the National Transportation Safety Board, Jennifer Homendy, criticized Tesla earlier this year, calling the feature’s name “misleading and irresponsible” and noting that Tesla had some “basic safety issues” that needed to be addressed before the feature was expanded to city streets and other areas.

Fortunately, Tesla appears to have begun addressing some of these safety concerns. For starters, it has finally begun taking driver monitoring seriously, using the built-in cabin camera in the Model 3 and Model Y. According to one Twitter user, the system will, under some circumstances, even lock drivers out of the feature if it detects that they are using a cell phone.

Got a tip or question for the author? Contact them directly: rob@thedrive.com