Welcome back to yet another edition of Speed Lines, The Drive’s morning roundup of the latest news in autos, tech and mobility. Conveniently, we’re going heavy on all three of those things today.

Let’s Talk About How The NTSB Slammed Tesla

Yesterday, the National Transportation Safety Board released its findings on a fatal 2018 California crash in which a driver died after his Tesla Model X hit a concrete barrier. The driver, Apple employee Walter Huang, was using Tesla’s Autopilot system at the time; for nearly the final 20 minutes of his fateful trip, he reportedly did not apply any torque to the steering wheel, and investigators say he was likely playing a video game with both hands off the wheel when the wreck happened.

You can read our news story on the report here. But it’s worth analyzing some lessons from this ordeal that are likely to affect the implementation of semi-autonomous driving technology moving forward.

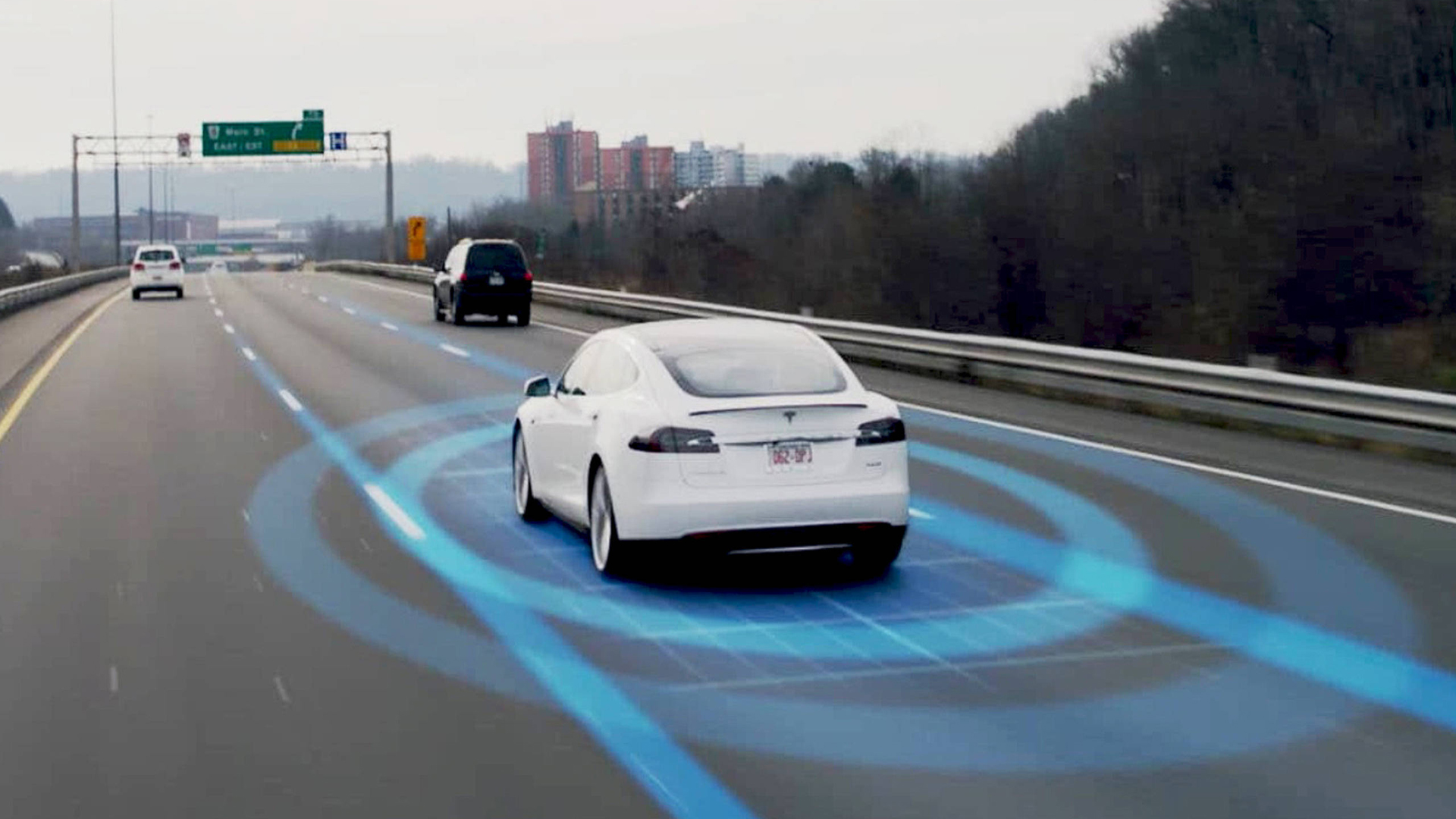

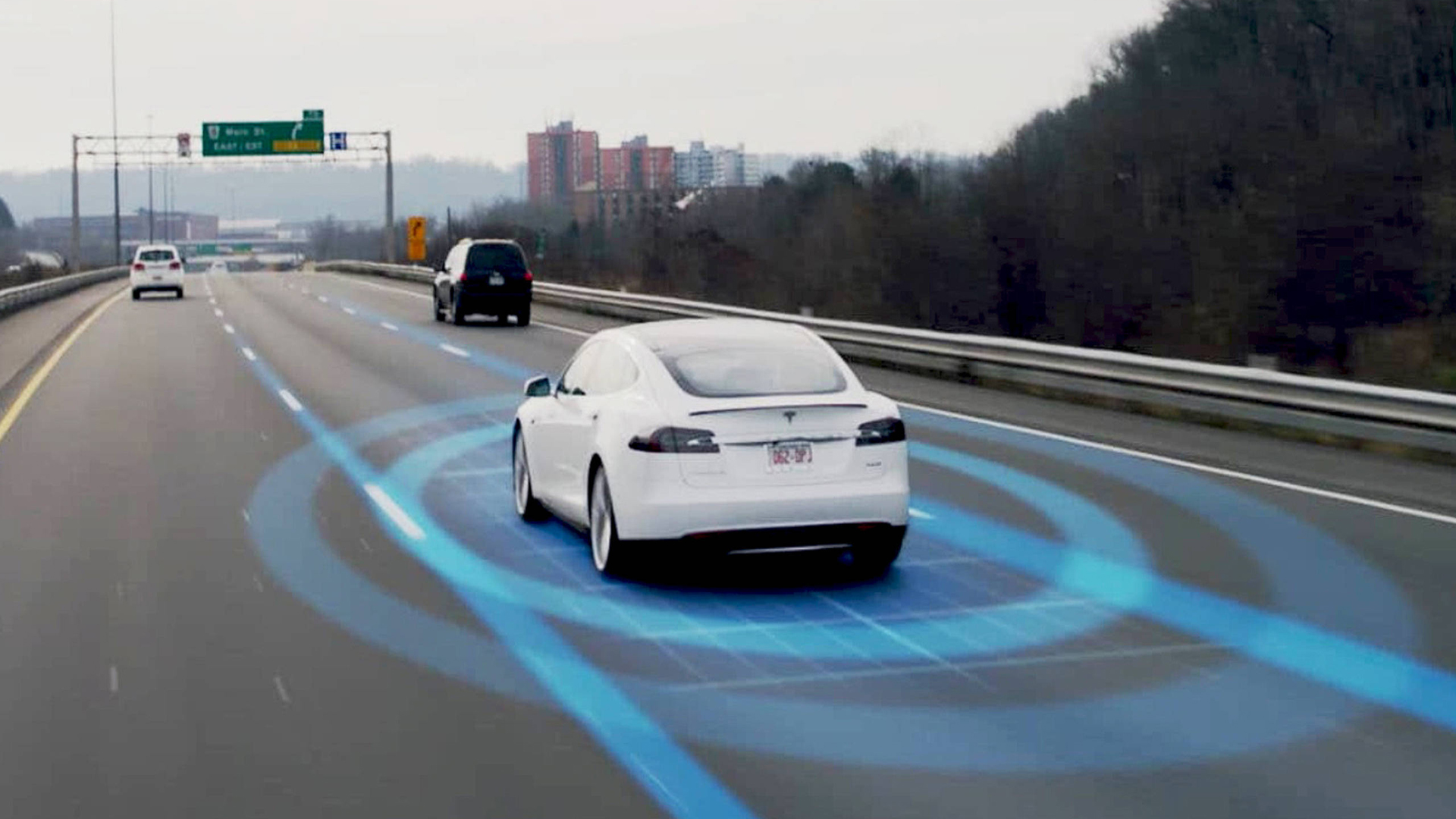

Notice that I said “semi-autonomous driving technology” and not “self-driving cars,” because as of right now, in 2020 and probably for many years after that, that’s what Tesla’s Autopilot is. It’s what Volvo’s Pilot Assist is, it’s what Cadillac’s Super Cruise is, and it’s what every comparable system is.

NTSB Chairman Robert Sumwalt pulled no punches saying so, emphasis mine:

“You cannot buy a self-driving car today,” he said. “You don’t own a self-driving car, so don’t pretend you do… This means that when driving in the supposed ‘self-driving’ mode, you can’t read a book, you can’t watch a movie or TV show, you can’t text and you can’t play video games.”

[…] “It’s time to stop enabling drivers in any partially automated vehicle to pretend that they have driverless cars,” he said.

Sumwalt also blasted Tesla for not responding to recommendations the NTSB issued in 2017, a year before this crash, to make such systems less prone to inattention and misuse. From the Los Angeles Times’ report:

Sumwalt pointed out that in 2017, the NTSB recommended automakers design driver-assist systems to prevent driver inattention and misuse. Automakers including Volkswagen, Nissan and BMW reported on their attempts to meet the recommendations, but Tesla never got back to the NTSB.

“Sadly, one manufacturer has ignored us, and that manufacturer is Tesla,” Sumwalt said Tuesday. “We’ve heard nothing; we’re still waiting.”

There’s a lot of blame to go around on this one, from the NTSB’s perspective. That includes Apple. Sumwalt said the iPhone’s “do not disturb while driving” feature is not enabled by default, and its existence is little understood by drivers. Then there’s Huang himself, who absolutely should not have been (reportedly) playing a mobile game on his phone, with both hands off the steering wheel.

But would Huang have done that if he were in a car that didn’t have semi-autonomous driving assistance tech? Or a car without a system marketed as “Autopilot” whose capabilities are shown, time and time again, to be misunderstood by drivers? Would he have done that in a car from an automaker that didn’t tout eventual Full Self-Driving Capability, even if that’s just an aspirational term for now? I’m going to go out on a limb here and say probably not.

If OEMs are going to keep promoting these driving assistance systems—and all of them are—and if they are to keep laying the groundwork for eventual self-driving cars, far more needs to be done in order to educate drivers about their true limits.

I’d say that’s the big, hot takeaway from this story, and it kind of is, but the NTSB has been sounding this horn for three years now. Has anything changed since then? Not really. These incidents keep happening, and let’s be honest with ourselves: they especially keep happening with one manufacturer in particular. It may, just may, be time for the guy who runs that company to admit he doesn’t know better than everyone else.

Drivers need to educate themselves too, but frankly, I tend to side more with everyday consumers than international companies with armies of engineers, lawyers and well-paid marketing people.

Once more, with feeling: You don’t own a self-driving car. And it’s time for Tesla and other OEMs to start driving that point home a lot harder.

More Aggressive Driver Monitoring Needed

As in the world of aviation, the NTSB cannot make regulations—only recommendations. With cars, that task falls to the National Highway Traffic Safety Administration, and the NTSB’s report also took shots at that agency’s ability to regulate the safety of these new technologies.

From the Wall Street Journal:

The NTSB and NHTSA have differed on Tesla’s Autopilot in the past as the government tries to adjust to the fast-moving world of increased automation in the automobile. Regulators are grappling with how to find the right balance between encouraging potentially lifesaving technology while ensuring the public is safe.

NHTSA has opened 14 investigations into Tesla crashes involving driver-assistance systems as part of a broader review of the technology. Two of those investigations include Tesla vehicles involved in fatal incidents in the past two months.

If the NTSB is to be believed, Tesla needs to do a much better job with driver monitoring systems in its cars—not just steering sensors, but eye and face monitors to ensure a driver isn’t doing something stupid like playing video games behind the wheel. More from that story:

The NTSB faulted the regulator’s investigating arm for not thoroughly assessing the effectiveness of Tesla’s driver-monitoring system, foreseeable misuse and risks of it being used in ways it wasn’t designed to handle. It urged further evaluation of the system.

[…] Tesla Chief Executive Elon Musk has acknowledged that some drivers are overly confident with Autopilot, but he has vigorously defended the system, saying his company’s data shows its vehicles are safer than others.

General Motors Co. has deployed similar technology. But its system includes a camera that monitors a driver’s eye movement to ensure that the driver is paying attention. Tesla has rejected that kind of technology, saying it is ineffective.

The Mountain View crash heightened concern about automation, in part because it came soon after a fatal crash in Tempe, Ariz., involving a test vehicle used by Uber Technologies Inc. to develop autonomous vehicles. In the Uber crash, a safety operator sat at the steering wheel with the job of taking control of the vehicle in case of emergency. That didn’t happen and the Uber vehicle struck and killed a pedestrian.

Since it’s now pretty clear we won’t be buying fully autonomous pods within the next five years, how best to improve driver monitoring may be the big auto safety debate of 2020.

More Coronavirus Impacts, This Time In Japan

As we’ve reported on Speed Lines before, the Wuhan coronavirus outbreak has been extremely disruptive to auto production and the supply chain in China. Now cases are skyrocketing in other parts of Asia. Japan is shaping up to be the next big battleground, reports Automotive News:

Toyota Motor Corp. on Wednesday said that operations at its plants in Japan may be affected by supply chain issues linked to the new coronavirus outbreak in the coming weeks, as the global outbreak gathers pace.

The automaker, which operates 16 vehicle and components sites in Japan, said that it would decide on how to continue operations at its domestic plants from the week of March 9, after keeping output normal through the week of March 2.

Plants may be affected by potential supply disruptions in China as some plants in the epicenter of the virus outbreak remain unable to produce and transport goods, while some plants remain closed under orders by regional authorities.

“We are receiving parts from China as normal for the moment, but we will assess the situation after the week of March 2,” a Toyota spokeswoman told Reuters.

Japan is a major site of production for the company, accounting for nearly half of the 10.7 million cars it sold globally in 2019.

Toyota Puts $400 Million Into Self-Driving Startup

Speaking of Toyota, and autonomy, Japan’s largest automaker just put a lot of money into Pony.ai, a Silicon Valley autonomy startup with Chinese ties and a growing list of partner companies. Here’s Reuters:

The Silicon Valley-based startup Pony.ai – co-founded by CEO James Peng, a former executive at China’s Baidu, and chief technology officer Lou Tiancheng, a former Google and Baidu engineer – is already testing autonomous vehicles in California, Beijing and Guangzhou.

The firm is focusing on achieving “Level 4”, or fully autonomous standards, in which the car can handle all aspects of driving in most circumstances with no human intervention.

The latest funding will support Pony.ai’s future robotaxi operations and technology development, one of the sources said.

Pony.ai, which has partnerships with automakers Hyundai and GAC, said it will explore “further possibilities on mobility services” with Toyota.

As that story notes, Toyota has been conservative compared to rivals in the autonomy game, but now it’s developing that tech in-house and putting more money into startups as well. Still, Toyota’s playing the long game, saying “it will take decades for cars to drive themselves on roads.” They’d be right!

On Our Radar

Profits jump at PSA ahead of Fiat Chrysler merger (Automotive News)

VW ID3 software problems threaten summer launch in Europe, report says (Automotive News)

Panasonic to exit solar production at Tesla’s N.Y. plant as partnership frays (Reuters)

Read These To Seem Smart And Interesting

How BTS Filmed a ‘Top Secret’ Video in Grand Central Terminal (NY Times)

Univision Sells to Group Led by Ex-Viacom Executive for Less Than $10 Billion (WSJ)

Your Turn

How do you keep this from happening again?

As I said, I think there’s plenty of blame to go around here, including, yes, the driver. But what can OEMs, drivers, dealers and everyone else involved in the furtherance of autonomy do to prevent these mishaps in the short term?