Even the lowest levels of car autonomy battle two object-recognition ogres. The first is how to reliably detect and classify any and all objects that may stand, walk, or drive in the car's path. The second is how to do that without breaking the bank, in terms of both production cost and power budget. Some cars famously have problems with trucks, especially fire trucks. Most aspiring autonomous cars find pedestrians and bicyclists a challenge, if they find them at all. Solutions requiring the compute power of a small data center have a hard time reaching affordably priced volume segments, and their huge power draw threatens to suck scarce miles from the battery.

What the world needs are super-reliable, low-cost, low-power, minimal-hardware solutions, and a joint project between automotive chip-monster Renesas and vision-processing software house StradVision promises just that. It also puts special emphasis on “detecting so-called vulnerable road users (VRUs) such as pedestrians and cyclists.”

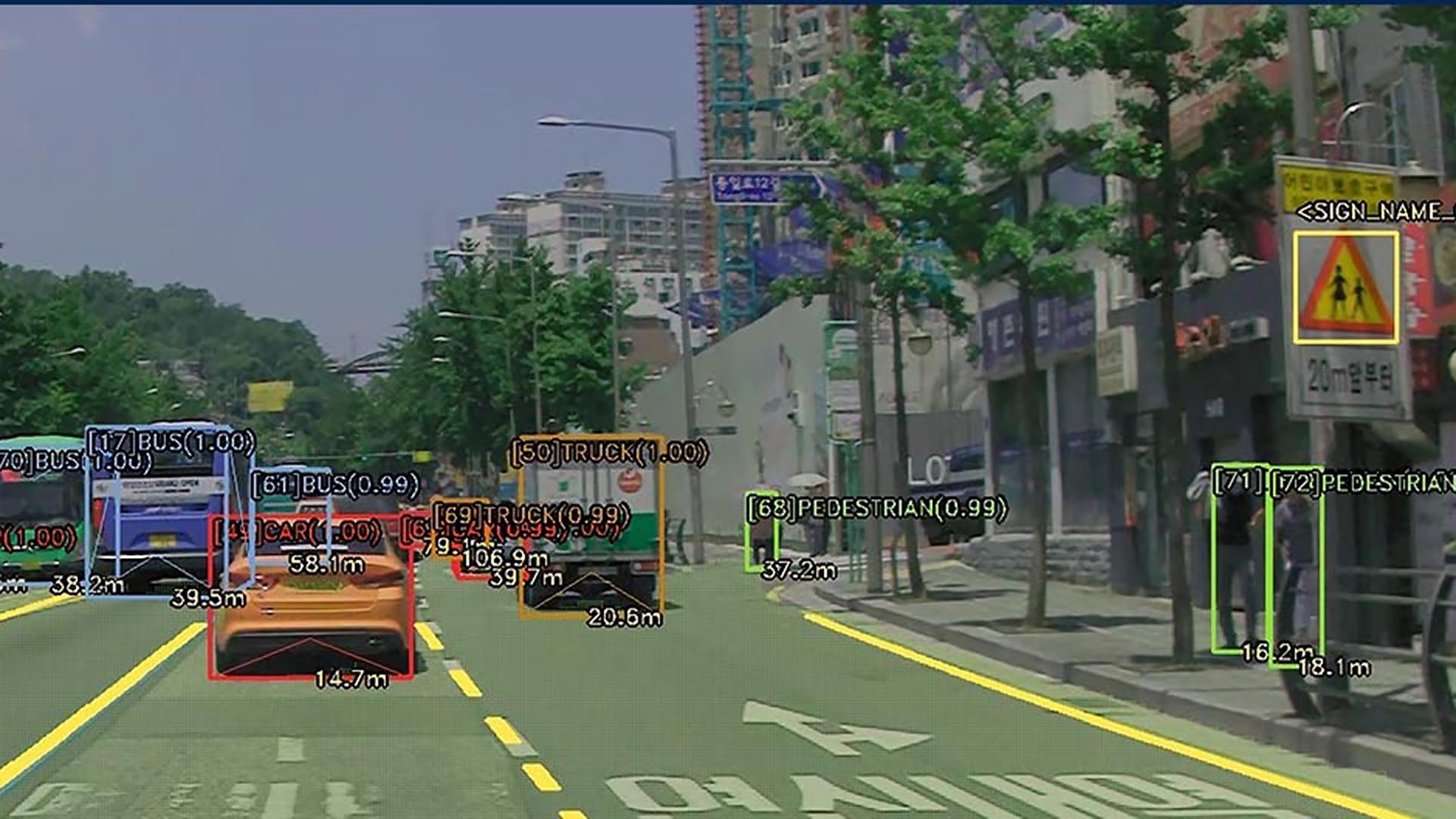

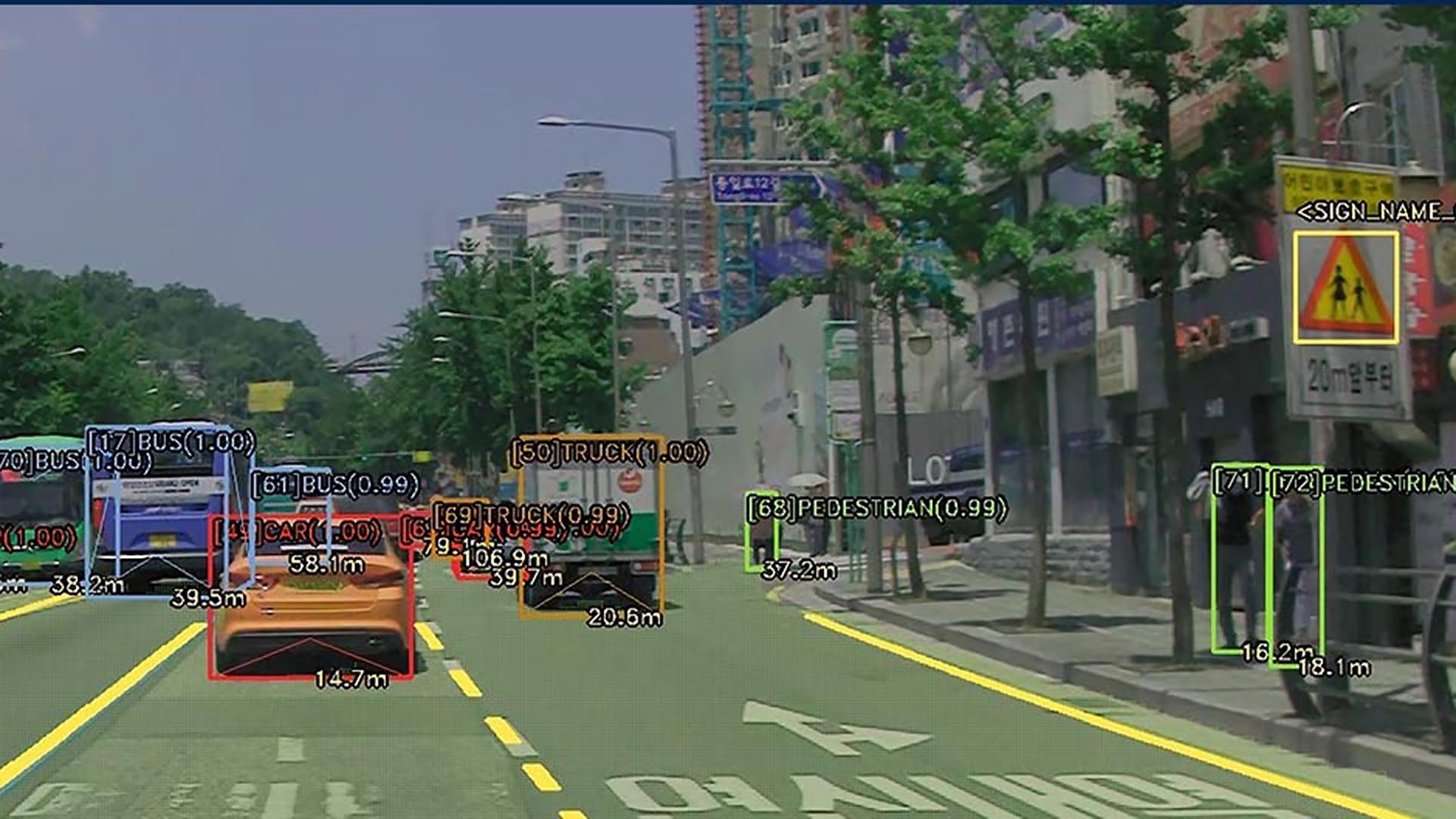

StradVision’s deep-learning-based object recognition software runs on Renesas’ R-Car V3H and R-Car V3M automotive system-on-chip (SoC) offerings, which look set to become standard fare in the auto industry. The V3H is (low-)powered by a frugal quad-core ARM Cortex CPU running at a sedate 1 GHz. During periods of lower demand, this computelet is assisted by a dual-core ARM processor running at an even lower clock speed. The SoC also includes a similarly low-power neural-network image-processing and computer vision unit, and StradVision’s software stack has been optimized for that platform. Paired with inexpensive cameras, the solution promises simultaneous vehicle, person, lane and traffic sign recognition at 25 frames per second, even in low light or when objects are partially hidden by other objects.
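For a sense of scale, the 25 frames-per-second figure implies a fixed time budget per frame. Assuming (our assumption, not a stated detail of the product) that all four recognition tasks must complete within one frame period rather than being pipelined across frames, the budget works out to:

$$
t_{\text{frame}} = \frac{1}{25\ \text{frames/s}} = 0.04\ \text{s} = 40\ \text{ms}
$$

How that 40 ms is divided among vehicle, person, lane and traffic sign recognition is not disclosed.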

Renesas plans to supply the new joint deep learning solution, including software and development support from StradVision, to developers by early 2020.