MIT Researchers Are Using Virtual Reality to Prevent Drone Collisions

Researchers have created a new obstacle-navigation system for UAVs.

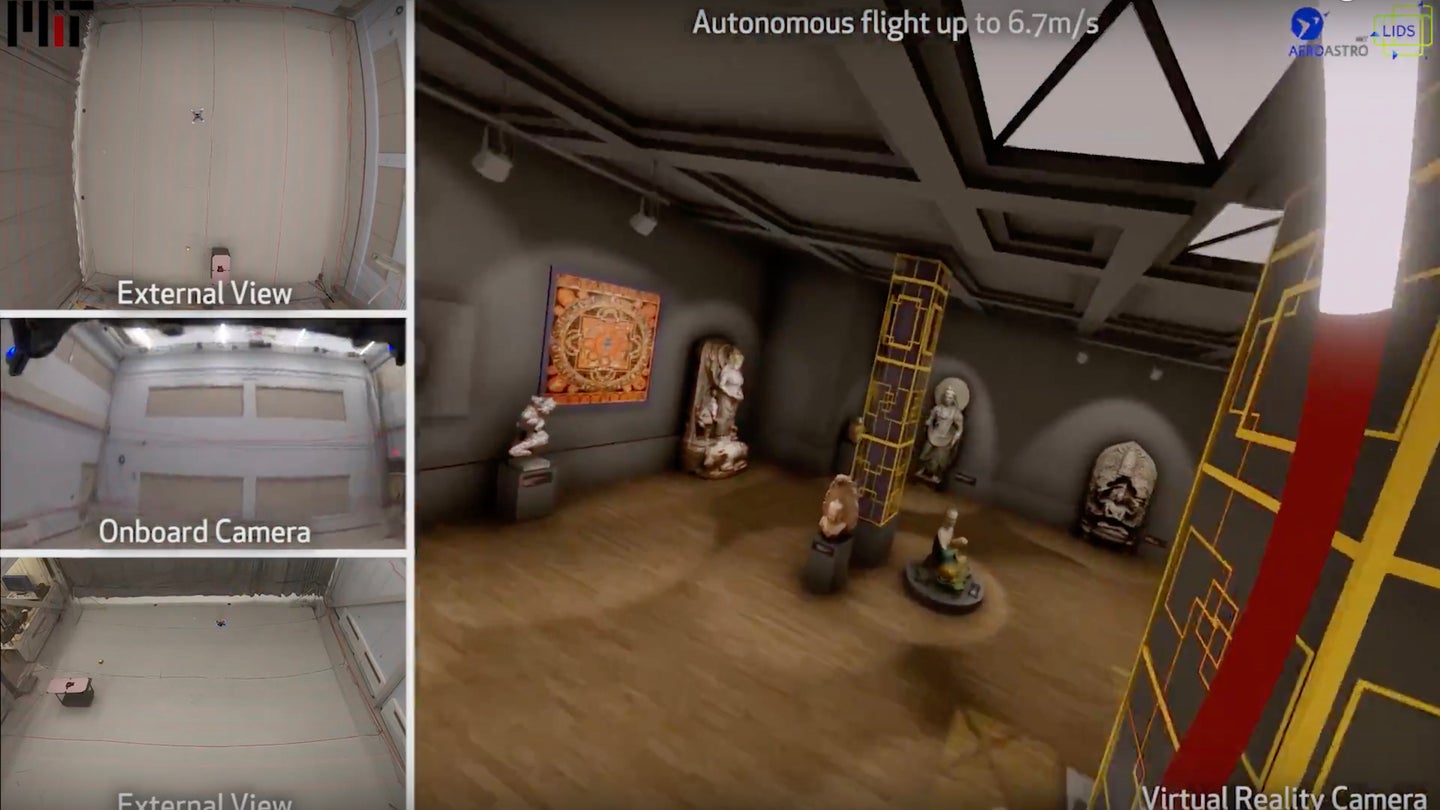

MIT researchers have developed a virtual reality-based system that lets drones navigate rooms while avoiding virtual obstacles.

The “Flight Goggles” system, developed by MIT's Laboratory for Information and Decision Systems along with its Computer Science and Artificial Intelligence Laboratory, reportedly allows an unmanned aerial vehicle to “see” a complete virtual environment and navigate around intangible objects.

“We think this is a game-changer in the development of drone technology, for drones that go fast,” said Sertac Karaman, associate professor of aeronautics and astronautics at MIT. “If anything, the system can make autonomous vehicles more responsive, faster, and more efficient.”

Members of the development team will present their findings at the Institute of Electrical and Electronics Engineers International Conference on Robotics and Automation next week.

Video footage shows the UAV flying rapidly through what is, in reality, a completely empty room. In virtual reality, though, there are several obstacles, and the drone successfully navigates around them. This may not seem exciting to the casual onlooker, but it could help make autonomous drone flight safer and more reliable.

“In the next two or three years, we want to enter a drone racing competition with an autonomous drone, and beat the best human player,” said Karaman. “The moment you want to do high-throughput computing and go fast, even the slightest changes you make to its environment will cause the drone to crash,” he added. “You can’t learn in that environment. If you want to push boundaries on how fast you can go and compute, you need some sort of virtual-reality environment.”

The “Flight Goggles” system incorporates motion capture, image rendering and more, with testing taking place at MIT’s new drone facility.

“The drone will be flying in an empty room, but will be ‘hallucinating’ a completely different environment, and will learn in that environment,” Karaman explained.

The virtual images fed to the UAV are reportedly rendered at 90 frames per second, roughly triple the rate at which the human eye can process images. An onboard supercomputer, alongside an inertial measurement unit and camera, allows the drone to keep up.

Using those capabilities, the drone reportedly crashed into a virtual window only three times in 361 attempts to fly through it. Karaman joked that the team “didn’t break any actual windows in this process.”
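
For a sense of scale, the reported figures can be turned into a rate. The short sketch below does only that arithmetic. The function name `crash_rate` is made up for illustration and is not part of the Flight Goggles software.

```python
def crash_rate(crashes: int, attempts: int) -> float:
    """Return the fraction of attempts that ended in a crash."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    return crashes / attempts


# Figures as reported in the article: 3 crashes in 361 attempts.
rate = crash_rate(3, 361)
print(f"crash rate: {rate:.2%}, success rate: {1 - rate:.2%}")
# prints "crash rate: 0.83%, success rate: 99.17%"
```

In other words, the drone cleared the virtual window in more than 99 percent of its reported attempts.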